
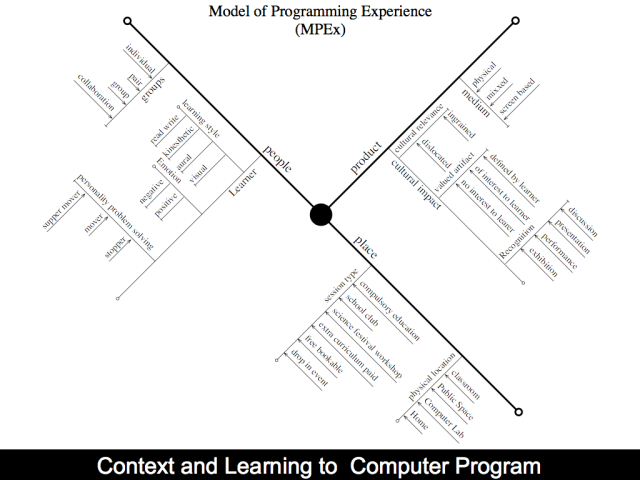

Assessment of computer programming tends to be based upon product rather than process: a student’s competence in programming is commonly measured via the final code produced. However, it is also important to encourage students to reflect on the software development process and hone the skills they need whilst producing code. In particular, it is important to teach the act of programming and avoid dependency on any one given language: throughout their careers, computing professionals need the ability to learn new and emerging languages. For students to be autonomous in their learning, they need to be equipped with self-reflection and analysis skills and encouraged to take a deep approach to their learning. This paper discusses two techniques used in an introductory computing course to develop students’ reflective practice: (i) triadic assessment (Gale et al, 2002) of weekly deliverables via group work and (ii) a student-generated multiple-choice class test. The design and evaluation of each technique are described and discussed.

Triadic assessment of group work

Group work is a common component in many undergraduate modules in computing as well as in other disciplines. There are sound practical and pedagogical reasons for creating this learning experience (Thorley & Gregory, 1994). However, assessing group work can be challenging, particularly in a situation where different group members assume different roles and responsibilities within a group. For instance, what is an equal share of work? How do you weigh up design input against technical contributions? One technique to encourage students to address these questions and to take a lead role is peer assessment. Its benefits include a rich engagement and understanding of the assessment process (McDermott et al, 2000). The learner is also encouraged to engage in higher cognitive skills (Fallows and Chandramohan, 2001) and critical evaluation (Anderson et al, 2001).

Motivation for Group Work

Professional software developers commonly work in teams, whether employed in fledgling start-ups or in multinational companies. The stereotype of the lone ‘geek’ absorbed in the act of programming, with little input from others, is a misconception. For this reason, the skills associated with working in a team are vital to a successful career in software development. Team projects are therefore commonplace among undergraduate computing degree programmes, but they can be problematic. The social skills required to manage workload distribution, to schedule team meetings and to deal with different levels of ability can be challenging to students.

In the context of computing, it is likely that a team project will involve the development of a specified piece of software in response to a brief or consultation with a client. With this approach, team working skills are placed in a motivating context. However, this can present a challenge to academic staff assessing the resultant coursework. Assessing the products against the learning objectives identified is straightforward; allocating a mark to each of the team members for the process performed can be contentious.

One approach is to treat the team as a whole and give the same mark to each team member. This places pressure on the team to function: if one member fails to deliver, the whole team suffers. Unfortunately, it also presents the opportunity for students to ‘hide’ from individual assessment. There is a risk that weak performances by students may be identified only by individual summative assessment and not by ongoing formative assessment. Providing each student with an individual mark that reflects their contribution to the project would appear to be the fairest approach. Defining contribution then becomes important. Over the course of many weeks of creative thinking, designing, implementing, crafting and refining, the key questions remain – who did what? What was it worth? What grade should each person get?

Peer assessment benefits and issues

The practice of students taking an active role in their assessment has shifted from the “enthusiastic innovators” to wider practice (Raadt et al, 2007). Topping et al (2000) define peer assessment to be: “an arrangement of peers to consider the level, value, worth, quality or successfulness of the products or outcomes of learning of others of similar status”. Peer assessment has been applied widely in areas including: educational psychology (Topping et al, 2000), teacher training (Sluijsmans et al, 2002), electrical engineering (McDermott et al, 2000), and computer science (Sitthiworachart and Joy, 2004; Hamer et al, 2009).

Peer assessment can be used formatively or summatively for individual or group work. Kennedy (2005) described the application of a peer assessment process when distributing a ‘group mark’ between group members, highlighting a number of issues including: the ability of students to objectively assess team members’ contributions, marginalisation of weaker students, and the tension generated by peer review. Kennedy reported large standard deviations in peer assessed marks indicating there was little consensus among students on what criteria are being assessed. He also asserted: “the task of assessment is the responsibility of the instructor. Students ought not to be placed in a position where they can influence their own grades or those of their peers” (Kennedy, 2005). This presents a fairly strong opinion about the role of the student with respect to assessment.

Within the School of Computing in the University of Dundee, one style of peer review process adopted involves each team member anonymously submitting a review form to award a mark to each of their team members. This enables a weighting and distribution of the ‘group mark’ to each of the individuals; the effect of the review process is capped at one grade to manage extreme reviews. This process suffers from some of the problems identified by Kennedy (2005) but has value in identifying problems with teams and disproportionate contributions. The benefits of peer assessment include the ability to reflect and critically appraise work similar to that which the reviewer is producing (Sitthiworachart and Joy, 2004). Nonetheless, two associated issues to be resolved when using peer assessment relate to depth and influence. Firstly, there can be a problem with the summative reflection about contributions to a substantial piece of coursework if the assessment criteria are too shallow. Secondly, social standing in groups has been observed to have an impact on peer assessment, such as when a group marks up a weakly-performing classmate. The next section describes an approach to enhancing peer assessment designed to address the shallowness issue and the social standing issue whilst retaining the value of reflection.
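The capped distribution of a ‘group mark’ mentioned at the start of this paragraph could be sketched as follows. This is a minimal illustration rather than the School’s actual procedure: the weighting rule (scaling the group mark by each member’s mean peer score relative to the team average), the width of one grade (`cap`, taken here to be 10 marks) and the function name `distribute_group_mark` are all assumptions made for the example.

```python
from statistics import mean


def distribute_group_mark(group_mark, peer_scores, cap=10.0):
    """Split a group mark into individual marks using peer review scores.

    peer_scores maps each member to the scores their team-mates gave them.
    ASSUMPTIONS: the proportional weighting rule and the cap width are
    illustrative choices, not taken from the paper.
    """
    # Mean score each member received from their peers.
    received = {member: mean(scores) for member, scores in peer_scores.items()}
    team_average = mean(received.values())

    marks = {}
    for member, score in received.items():
        # Scale the group mark by the member's standing relative to the team.
        raw = group_mark * score / team_average
        # Cap the adjustment so extreme reviews move a mark by at most
        # one grade (assumed to be `cap` marks wide).
        adjustment = max(-cap, min(cap, raw - group_mark))
        marks[member] = round(group_mark + adjustment, 1)
    return marks


# Hypothetical four-person team: each member is scored by three team-mates.
example = distribute_group_mark(
    group_mark=60,
    peer_scores={
        "ana": [9, 9, 9],
        "ben": [8, 8, 8],
        "cat": [12, 11, 10],
        "dan": [4, 4, 4],
    },
)
```

In this hypothetical team, "cat" and "dan" receive extreme reviews, so their adjustments are clipped to one grade above and below the group mark, while "ana" keeps the full uncapped adjustment.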

Peer assessment in the ‘Data Visualisation’ module

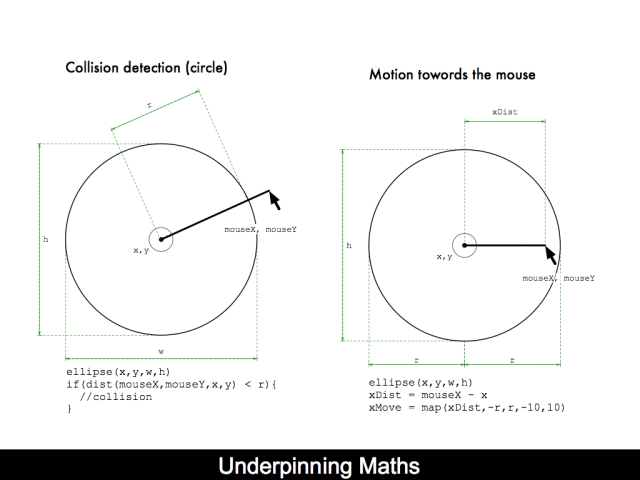

Data Visualisation is an introductory undergraduate module that draws together a number of key curriculum areas. Human Computer Interaction Design comprises a set of skills and knowledge that enable students to build interactive computer systems that fit the needs of users. Consideration of social, legal and ethical issues is essential when working with users and developing technology that may have an impact on wider society. These are learned in the context of 2D graphics and data visualisation project work. By pulling these together with an appropriate scenario, students can learn in an environment in which they are applying their emerging skills to tell stories with data and communicate information to specific people with specific needs.

This module runs in semester one and for many students it may be their first experience of computer programming. Learning to program is problematic for many and hence a tight feedback cycle is desirable. For this reason, in 2011 the Data Visualisation module was designed with a weekly piece of coursework to enrich the learning experience. The weekly coursework activity is triadically assessed (Gale et al, 2002). This involves three distinct, complementary phases. (i) Self-reflection: the learner is encouraged to reflect on the item of coursework. (ii) Peer review: the learner is required to critically appraise the work of their peers, offering constructive feedback. (iii) Tutor feedback: in the last stage of the process, the tutor is able to consolidate the assessment by offering feedback and endorsing a final grade. Taken together, these offer opportunities for rich and varied feedback.

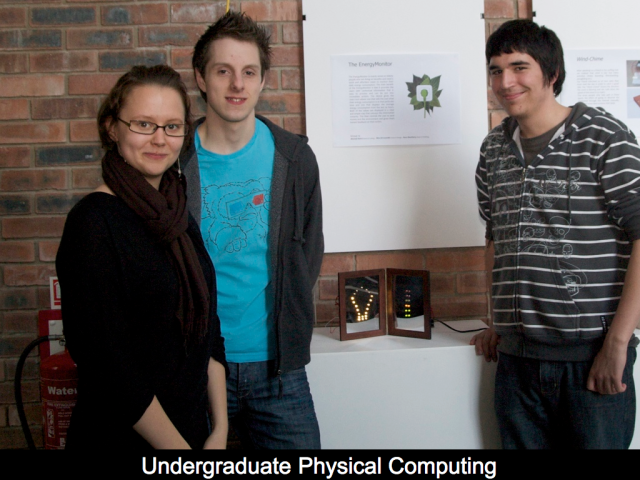

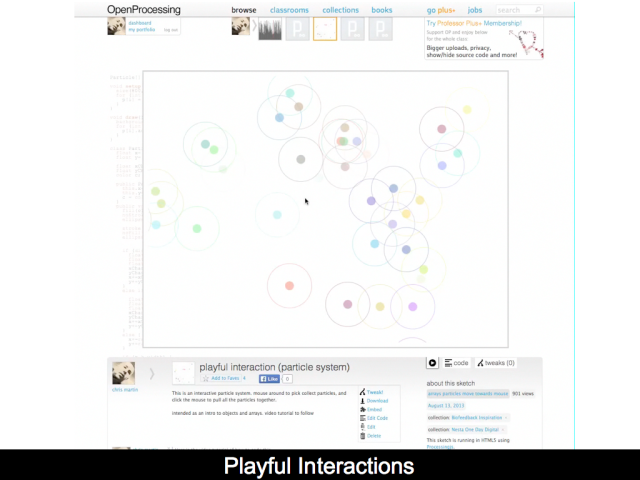

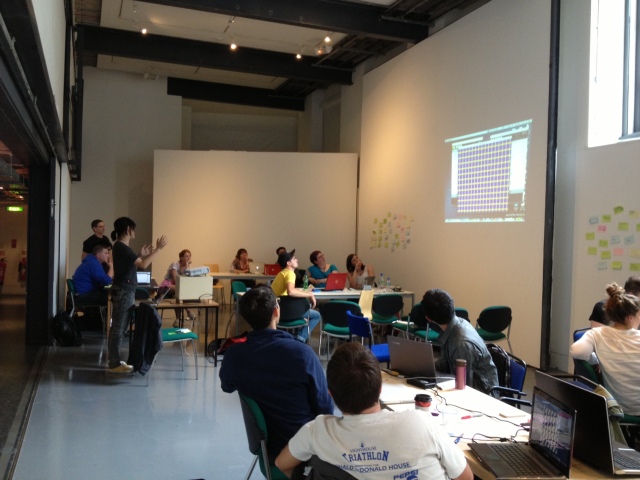

Assessment begins with the issue of a team assignment and the allocation of review groups (Thursdays). This takes place following a two-hour block of teaching in the morning and precedes the afternoon lab session. In the lab the teams have some protected time to work together on the assignment. Students are supported by the module tutor and lab tutors (students who studied the module the year before). Following the lab, time is allocated for individual contributions and refinement prior to submission at 12 noon the following Tuesday. Coursework is submitted to an online community website (www.openprocessing.org). This website allows the ‘sketches’ submitted to be viewed and comments to be left below each ‘sketch’. At the point of submission, each team member is required to comment on what they have contributed and what they have learned in undertaking the piece of coursework. This is the first part of the triadic assessment: self-reflection. Each person is also required to view and comment on the work uploaded by the other teams in their review group.

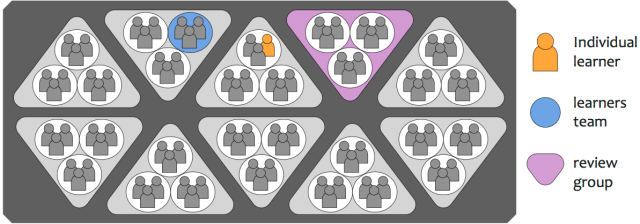

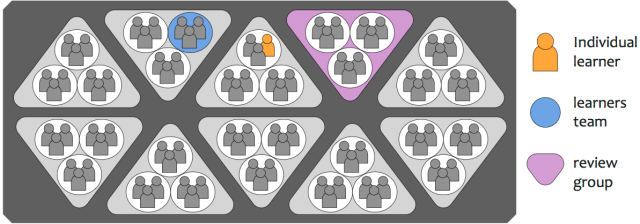

The assessment process is then pulled together in the Thursday review session. For the last 30 minutes of the teaching block the class arrange themselves into review groups each comprising three teams (depicted as triangles in Figure 2). Each team’s work is briefly presented and discussed by the review group before the group arrives at a mark and provides some qualitative feedback, which is recorded on a paper form. At the end of this review session, each review group reports back briefly to the entire class, sharing the highlights of what has been discussed, including exceptional work and lessons learned. This is the second part of the triadic assessment: peer review. The final stage each week is for the tutor to review the mark sheets completed by the review group against the sketch submitted and award a mark and comment for the coursework. This is the third part of the triadic suite: tutor feedback.

Figure 1 – Arrangement of teams and review groups
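The weekly allocation of teams to review groups can be illustrated with a short sketch. The paper does not say how teams were assigned, so the random shuffle below is an assumption, as are the function name `allocate_review_groups` and the team labels; the only detail taken from the text is that each review group comprises three teams.

```python
import random


def allocate_review_groups(teams, group_size=3, seed=None):
    """Shuffle the teams and split them into review groups.

    ASSUMPTION: random allocation is an illustrative choice; the module
    may have used a different scheme. If the number of teams is not a
    multiple of group_size, the final group is smaller.
    """
    rng = random.Random(seed)
    shuffled = list(teams)
    rng.shuffle(shuffled)
    return [
        shuffled[i:i + group_size]
        for i in range(0, len(shuffled), group_size)
    ]


# Hypothetical class of nine teams, giving three review groups of three.
teams = [f"team{i}" for i in range(1, 10)]
review_groups = allocate_review_groups(teams, seed=1)
```

Passing a different `seed` each week would give each team a fresh set of peers to review, which may help reduce the influence of fixed social groupings.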

The module requires a lot of preparation, presenting and group discussion of peers’ work. At the outset, clear guidelines are given on the expectations of students participating in these activities. When commenting on peers’ work, they are encouraged to adopt a “two stars and a wish” structure to the feedback: identify two strengths and one thing that could be improved. Asking questions and giving comments are highlighted as indications of interest and engagement in ideas being presented. Constructive criticism indicates that a reviewer has thought about the work sufficiently to form an opinion and to identify areas for extension. Learning to take criticism as a compliment is an important skill. This encourages students’ comments, criticism and peer grading to be based less on social standing in the group and more on the work being reviewed.

Evaluation of triadic assessment

At the beginning of the following semester, feedback was obtained from students about the module as a whole. Students were given 18 statements each with a 40 millimetre line next to it to graphically depict a spectrum from strong agreement (0) to strong disagreement (40). There were 36 responses. The following three statements were relevant to assessment:

“I found the weekly discussions about coursework with other groups interesting.”

The mode response was 5 (out of 40), indicating strong agreement. In more detail, 66% of students agreed, 5% were neutral and 20% disagreed.

“I found it motivating to have a say in my peers’ coursework marks through weekly discussions.”

The mode response was 20, indicating neutrality. In more detail, 56% of students agreed, 25% were neutral and 20% disagreed.

“I don’t believe it is within my role as a student to be involved in assessing coursework.”

The mode response was 35, indicating disagreement. In more detail, 17% of students agreed, 14% were neutral and 69% of students disagreed.
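The summary figures reported for each statement (a mode and the share of students agreeing, neutral or disagreeing) can be derived from the measured positions on the 40 millimetre line along the following lines. The paper does not state the cut-offs used, so the neutral band of 15 to 25 mm and the 5 mm bins used for the mode are assumptions, and the sample measurements are invented for illustration.

```python
from collections import Counter

LINE_LENGTH_MM = 40  # length of the printed line on the questionnaire


def classify(mark_mm, neutral_band=(15, 25)):
    """Label one response. ASSUMPTION: the neutral band is illustrative."""
    if not 0 <= mark_mm <= LINE_LENGTH_MM:
        raise ValueError("mark must lie on the printed line")
    low, high = neutral_band
    if mark_mm < low:
        return "agree"      # marks near 0 indicate strong agreement
    if mark_mm > high:
        return "disagree"   # marks near 40 indicate strong disagreement
    return "neutral"


def summarise(marks_mm, bin_width=5):
    """Return the modal bin and the percentage of responses per category."""
    # Snap each measurement to the nearest multiple of bin_width so that
    # a mode is meaningful for continuous measurements (ASSUMED bin size).
    binned = [bin_width * int(m / bin_width + 0.5) for m in marks_mm]
    mode = Counter(binned).most_common(1)[0][0]
    labels = Counter(classify(m) for m in marks_mm)
    percentages = {
        label: round(100 * labels[label] / len(marks_mm))
        for label in ("agree", "neutral", "disagree")
    }
    return mode, percentages


# Invented measurements in millimetres, for illustration only.
mode, percentages = summarise([3, 5, 6, 12, 20, 22, 31, 38])
```

Because each percentage is rounded independently, the three categories will not always sum to exactly 100.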

These responses are generally positive, with students broadly agreeing that it is within their role to take an active part in assessment, and more than half feeling benefit from the weekly discussion. Although the most frequent response to motivation from influence over a peer’s mark was neutrality, over half the class were in broad agreement with this statement. As a complement to the visual analogue scale questionnaire, there was an opportunity to give free text comments via post-it notes in response to one further question: “if you only had a week to do the module again, what should we keep (green post-it) and what should we lose (red post-it)?”

A sample of responses relevant to the assessment methods is given next:

[green] “Group work: love all the working in groups. Learn so much better. Good fun, much more effective. Loved the course!”

[green] “Assessing other people’s work because it gave a chance to discuss their sketch with others.”

[green] “Group work – good to have other people on your group that can help you, but that is only if your group was a good one.”

[red] GROUP MARKING

[red] Not much time to complete assignments

Reflections on triadic assessment

Considering the evaluation data and observation throughout the semester, there is evidence to suggest a triadic approach to assessment has been successful in the context of this introductory computing module. On reflection, the most successful part of the process was the 30-minute face-to-face review session. Listening to the various discussions that took place throughout the semester, it was encouraging to hear students critically appraise and defend their work. In the majority of cases when the tutor amended marks, it was to provide a higher mark than that suggested by the peer review group.

One aspect of the process engaged with to a lesser extent was the online discourse through comment threads. In several cases, there was a good degree of engagement with conversations about work posted. However, the majority of the cohort quickly realised that there was little consequence for not engaging in this part of the process and failed to comment regularly. It may be that this virtual engagement is less important when there is a regular face-to-face session, or it may be that the absence of direct effect on grades resulted in it being perceived as a low priority activity. Given the favourable response from students to this activity, the following year we sought to engage the students further in the assessment process.

Student-generated assessment

The majority of the Data Visualisation module’s assessment is via practical group work. Nonetheless, there is a need for an element of individual assessment to ensure students have adequately engaged in the module materials and are equipped to build on their learning as they progress through their chosen degree path. End-of-module exams and summative assessments are typical and often involve a huge amount of work to create, evaluate and assure quality. In the 2013 Data Visualisation module, we were keen to meet the same objectives but with as great a degree of flexibility as possible, preferably engaging the students in the process of exam setting as much as possible. There are significant risks and benefits to this type of strategy and it is important to stress that this was ‘breaking new ground’: work remains to be done in subsequent years.

One technique was used to punctuate long teaching blocks and to encourage students into a ‘review’ state of mind: the use of the one-minute paper (Angelo & Cross, 1993). In this, the tutor asks the students a small number of questions at the conclusion of the class to identify the key things learned and any areas of uncertainty. Deriving assessments from these student-generated questions essentially means that their reflections on their own learning lead the assessment process.

Student-generated class test

As adopted in the Data Visualisation module, five questions relating to the material being taught were posed at the end of teaching various key concepts. Students were given a few minutes to write their responses on an answer sheet. They were then given several more minutes to discuss their answers with their neighbours. Finally the questions were discussed by the group as a whole and answers offered by the students. Answer sheets were also collected for brief review by the module tutor, who gained a sense of what understanding the class had at that stage. The main purpose of the activity is to get people thinking and resolve any ambiguities about key points of the session.

This change of activity proved to be a valuable disruption at several points throughout a three-hour block of teaching. To create an authentic individual assessment for the end of the module, we adapted this approach further. Initially it was made an out-of-class activity to assist students in their revision. Students had to write and submit their own revision questions and produce their own answers. The number of questions submitted in this form was disappointing, so next we moved it to take place during each teaching block. As the semester progressed, we increased this to several times throughout the teaching block: at various points students were required to generate a multiple-choice question that they felt was relevant to the current topic being discussed. Creating a question is more demanding than just providing an answer, and this encouraged a similar review state to the one-minute paper technique used previously.

The actual implementation of this student-led assessment relied on paper forms that students filled out in class. They were required to provide their recommended answers in addition to the questions. One technical platform that would potentially provide the functionality required is PeerWise (Denny et al, 2008); this will be explored in future years. A computer-based solution (which would massively speed things up) was rejected for the following reasons. Over the past five years there has been a massive increase in the number of laptops and smart phones in the lecture theatre. Indeed, we can be confident that when assembling review groups (3 groups of 3 students) there will be at least one laptop, allowing the group to browse the internet. However, requiring all students to have internet access in a lecture does risk marginalising some students. There is also a degree of simplicity and clarity from a simple pen and paper activity, and there are advantages to a tangible collection of questions: as the semester progressed we collated an ever-enlarging folder of questions, visible in the undergraduate labs, that contained all the questions created so far. This allowed students to get a feel for what their peers were asking and what the class test might look like.

Finally, all questions and answers were verified by module tutors and a class test produced from this set of student-generated questions, comprising 60 multiple-choice questions. The module covers 6 broad topic areas and a sample of 10 questions from each topic was picked.
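The assembly of the paper from the verified question bank can be sketched as a simple stratified sample. The random selection, the `fallback` pool of tutor-written questions used when a topic has too few student questions, and the function name `assemble_test` are assumptions made for illustration; the text above specifies only that 10 questions were picked for each of the 6 topics.

```python
import random


def assemble_test(verified, fallback, per_topic=10, seed=None):
    """Pick per_topic questions for every topic.

    verified: topic -> list of tutor-verified student questions
    fallback: topic -> list of tutor-written questions (HYPOTHETICAL pool
              used only when a topic has too few student questions)
    ASSUMPTION: questions are drawn at random; the tutors may instead
    have chosen them by hand.
    """
    rng = random.Random(seed)
    paper = {}
    for topic, questions in verified.items():
        # Take as many student questions as are available, up to per_topic.
        chosen = rng.sample(questions, min(per_topic, len(questions)))
        shortfall = per_topic - len(chosen)
        if shortfall:
            # Raises ValueError if the fallback pool is also too small.
            chosen += rng.sample(fallback.get(topic, []), shortfall)
        paper[topic] = chosen
    return paper


# Invented question banks: one well-covered topic and one with a shortfall.
verified = {
    "Introduction to Processing": [f"student-A{i}" for i in range(43)],
    "Interaction and Transformation": [f"student-B{i}" for i in range(8)],
}
fallback = {"Interaction and Transformation": ["tutor-B0", "tutor-B1", "tutor-B2"]}
paper = assemble_test(verified, fallback, seed=2013)
```

With six topics in `verified`, the same call would yield the 60-question paper described above.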

Evaluation

The number of questions received varied from topic to topic. The greatest number of responses (43) was from the first topic covered: ‘Introduction to Processing’. The smallest number of responses (12) was from a topic in mid semester: ‘Interaction and Transformation’. The mean response was 21 questions per week, which from a class of 70 leaves room for improvement. Increasing engagement and improving response rates from students is an area requiring further attention. There is potential to increase the amount of feedback students are receiving with regard to their questions, as the current model is not very responsive. In practice, the shortfall in submissions meant that around 20% of the questions in the final test were not generated by the students. In future years the previous year’s bank of questions will be used to meet any shortfalls. The cohort presented with a wide range of abilities and this is reflected in the questions offered: this allowed for a good range of difficulties in the final paper.

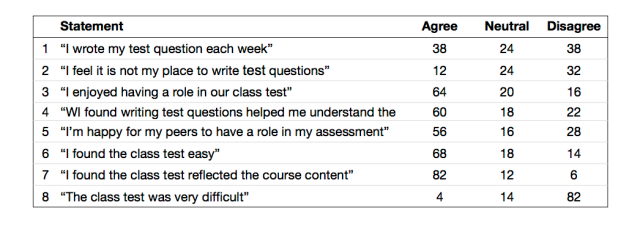

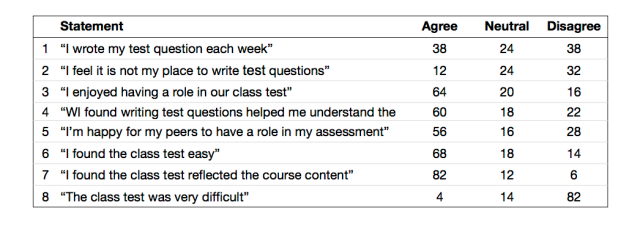

The test was well received by students and resulted in a mean test score of 66%. An evaluation took place at the end of the course using Visual Analogue Scales (VAS) (Cowley & Youngblood, 2009) to discern agreement or disagreement with a number of statements relating to the class-generated test; the results are in Table 1.

Table 1 – Evaluation of class test results (all values are percentages) n=50

It is very encouraging that 82% of respondents felt the class test reflected the course content. One of the key motivations for this approach to a summative end of module assessment was to ensure authenticity. It is also encouraging to see broad agreement with statements relating to assessment being within the role of the students. 60% of students found the act of writing questions helpful in assisting their understanding.

The low level of response and open admission to this in the evaluation remain problematic. With large class sizes and little resource to support these activities, an approach of incentivisation must be taken. The current model gives students a good chance that they will be presented with one of their own questions in the test. This may be further enhanced by a greater level of feedback throughout the process. The difficulty of the test is also an interesting challenge. This should in theory be self-managing, since students provide questions at a level that challenges them. In practice, the survey responses indicate the test did not present sufficient challenge. It is important to interpret these results as student perceptions: the class test was externally moderated to ensure an appropriate level, but was perceived by students to be less challenging. The importance of the final result may also be balanced against the degree of engagement the students have had. With this model of assessment the final test and resultant grade is only a part of the process. The process of question generation serves to enhance the learning opportunity offered significantly.

Reflections on student-generated assessment

This student-generated assessment system worked well and resulted in a genuine final summative assessment that reflected the learning students had engaged in. Reviewing the final paper against the module specification, the questions generated did fit well with the intended learning outcomes. The potential benefits of an electronic system are great and this will be explored in future. One of the biggest issues with the paper-based system was the inability to readily give quick feedback if a question was poorly formed or presented with an incorrect answer. In several cases, the only way to achieve this was when speaking to the class as a group. An online system would also offer the ability for students to review and comment on questions, although attaining this degree of engagement from students may prove difficult.

Conclusion

Assessment is a crucial part of learning. Students often see this as an intimidating, external mechanism designed to match their abilities to a number which ranks them in the class. This can be one purpose of assessment but only a small part of what it can achieve. Bringing the student to the heart of assessment has the potential to improve a broad range of skills, and ensures they understand and are empowered to take ownership of their learning. The peer review process described in this paper supports the process with a number of levels of engagement, from online commenting to face-to-face discussion. It has been a positive experience that has given our students the opportunity to obtain feedback (input and reflection) from assessors closer to their level of expertise and who have faced similar challenges. Placing an end-of-module summative assessment in the hands of the student cohort has also been valuable. There was no evidence of students gaming the system: the questions they produced reflected well on their interpretation of the teaching materials and clearly resonated with the groups. It has encouraged students to produce a valuable self-reflective perspective on their own work.

References

Anderson, L W, Krathwohl, D R, Airasian, P W, Cruikshank, K A, Mayer, R E & Pintrich, P R (2001) A Taxonomy for Learning, Teaching and Assessing: A Revision of Bloom’s Taxonomy of Educational Objectives, Theory Into Practice, Volume 41, Number 4, Addison Wesley Longman, Inc.

Angelo, T A & Cross, K P (1993) Classroom Assessment Techniques, 2nd ed., Jossey-Bass, San Francisco

Cowley, J A, & Youngblood, H (2009) Subjective response differences between visual analogue, ordinal and hybrid response scales, Proceedings of the Human Factors and Ergonomics Society Annual Meeting, Sage Publications

Denny, P, Hamer, J, Luxton-Reilly, A & Purchase, H (2008) PeerWise, In Proceedings of the 8th International Conference on Computing Education Research (Koli ’08). ACM

Fallows, S & Chandramohan, B (2001) Multiple Approaches to Assessment: reflections on use of tutor, peer and self-assessment, Teaching in Higher Education, vol 6 no 2, pp 229-246

Gale, K, Martin, K & McQueen, G (2002) Triadic Assessment, Assessment & Evaluation in Higher Education, Taylor & Francis Ltd, Vol. 27, no. 6, pp 557-567

Hamer, J, Purchase, H C, Denny, P & Luxton-Reilly, A (2009) Quality of Peer Assessment in CS1, 5th International Workshop on Computing Education Research, 10-11 Aug 2009, Berkeley, CA, USA

Kennedy, G J (2005) Peer-assessment in Group Projects: Is it worth it?, Seventh Australasian Computing Education Conference (ACE2005), Newcastle, Australia

McDermott, K J, Nafalski, A & Gol, O (2000) Active Learning in the University of South Australia, 30th Annual Frontiers in Education Conference (FIE 2000), 18-21 October 2000, Kansas City, Missouri

Raadt, M D, Lai, D & Watson, R (2007) An evaluation of electronic individual peer assessment in an introductory programming course, In Proceedings of the 8th International Conference on Computing Education Research (Koli ’08). ACM

Sitthiworachart, J & Joy, M S (2004) Effective Peer Assessment for Learning Computer Programming, 9th Annual Conference on Innovation and Technology in Computer Science Education (ITiCSE 2004), 28-30 June 2004, Leeds, UK

Sluijsmans, D, Brand-Gruwel, S & Merrienboer, J (2002) Peer Assessment Training in Teacher Education: Effects on performance and perceptions, Assessment and Evaluation in Higher Education, vol 27 no 5, pp 443-454

Thorley, L & Gregory, R (1994) Using Group-based Learning in Higher Education, Kogan Page

Topping, K J, Smith, E F, Swanson, I and Elliot, A, (2000) Formative peer assessment of academic writing between postgraduate students, Assessment and Evaluation in Higher Education, vol 25 no 2, pp 149-169